on June 28, 2016

Table partitioning seems simple, but there’s a lot of complexity in designing and managing it if you decide to use filegroups and splitting.

When you first implement partitioning in this scenario, you decide where you’re going to keep “out of bound” data when you create your partition scheme. Be careful when you make that decision, because it may not be easy to change later.

Let me show you what I mean.

Let me show you what I mean.

Meet our LEFT based partition function

Let’s say we want to partition a table that uses the datetime data type. We want to partition on a daily basis and assign each partition to its own filegroup.

We decided we want to use a “LEFT” based partition, meaning that the boundary points dividing our partitions are an “upper” bound and the boundary point sticks with the partition to the left of it.

We don’t want the data for midnight to be in a different partition, so we do this by making the boundary point the very last moment in the day for the DATETIME data type, like this:

CREATE PARTITION FUNCTION pf_splitthis (datetime)

AS RANGE LEFT

FOR VALUES (

'2015-12-31 23:59:59.997', '2016-01-01 23:59:59.997', '2016-01-02 23:59:59.997', '2016-01-03 23:59:59.997');

GO

Yep, the precision of ye olde DATETIME datatype is such that .997 is the last moment in the day.

The partition scheme maps the data

After I create my partition function, I need to map where the data goes. I do that with this code:

CREATE PARTITION SCHEME ps_splitthis

AS PARTITION pf_splitthis

TO ([fg_splitthis_A],[fg_splitthis_B],[fg_splitthis_C],[fg_splitthis_D],[fg_outofbounds]);

GO

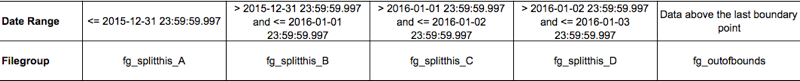

With my left-based partition function, here’s what the data assignment looks like:

Note that there is no boundary point mapped to fg_outofbounds

Our highest boundary point is 2016-01-02 23:59:59.997, which is mapped to the filegroup to its LEFT– fg_splitthis_D.

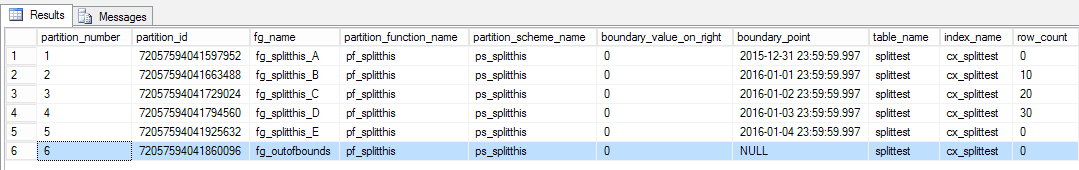

I create a table on the partition scheme and insert some rows for January 1, January 2, and January 3. Here’s what the metadata looks like after that.

Now I SPLIT

I want to add a new partition, assigned to a new filegroup. I add the filegroup and file to my database.

To split, I need to tell SQL Server where to put the data for the next boundary point I define. I do this with ALTER PARTITION SCHEME and setting the NEXT USED property. Then I can add my boundary point:

ALTER PARTITION SCHEME ps_splitthis

NEXT USED fg_splitthis_E;

GO

ALTER PARTITION FUNCTION pf_splitthis() SPLIT RANGE ('2016-01-04 23:59:59.997');

GO

After making this change, here’s what my filegroup mapping looks like now:

Our new boundary point specifies that data for January 4 goes on fg_splitthis_E.

Data above the last boundary point (which is now the 4th) still goes to fg_outofbounds

I can continue to set NEXT_USED and split, but that won’t change the filegroup assignment for data above the last boundary point in my LEFT based partition function. fg_outofbounds is where any data above whatever is the highest value boundary point will go. Its partition_id is not changing when I SPLIT.

Why do people care?

This might be very confusing if I’d happened to have named that filegroup fg_splitthis_20160104, or something that looked like it was assigned to a specific date.

Can I change fg_outofbounds to a different filegroup?

Here’s where it gets awkward. The only ALTER option for PARTITION SCHEME is to set NEXT USED. And next used only sets which filegroup will accept a new partition created by a boundary point. That doesn’t help us.

The only way I know of to change where data above the highest boundary point in a LEFT based partition scheme goes is to do something like this in an outage window:

- Make sure the partition using fg_outofbounds is empty. (If not, add the appropriate filegroup, etc)

- Create a new partition scheme on your partition function with all the filegroups the same, except for fg_outofbounds (which is wherever you want it to be)

- Create a new table that matches your old table (except for names) on the new partition scheme with matching indexes and constraints

- Switch all the non-empty partitions to the new table

- Make sure identities are properly seeded

- Rename everything

- Cross every finger and toe that I didn’t forget something on this list

That doesn’t sound like much fun. (If you know of a less painful way, let me know in the comments!)

And honestly, usually it’s not worth fixing. We probably shouldn’t be allowing data in outside the boundary points anyway.

But yeah, managing this isn’t so easy.

Takeaways: Be Careful When Designing Partitioning

It’s not actually so easy to change everything later. Be careful deciding how you want to use filegroups, and how many you want to manage. More info on making that decision is in my post here.